In This Blog

- Why Data Access Becomes the AI Bottleneck

- What MCP Actually Is

- Why Direct Integrations Don’t Scale

- What “Build Once, Use Anywhere” Actually Means

- How MCP Changes Enterprise AI Economics

- Security and Governance Considerations

- How the Enterprise AI Stack Connects Together

- Where MCP Fits in the Microsoft AI Stack

- When Organizations Should Start Thinking About MCP

- The Bigger Picture

- FAQ

TL;DR

Most organizations think scaling AI is primarily about choosing the right model. In reality, the bigger challenge is giving AI systems reliable, secure, governed access to enterprise data.

That’s where MCP, or Model Context Protocol, comes in.

MCP is an emerging open standard that creates a consistent way for AI agents to access systems, retrieve data, and take action across enterprise environments. Instead of building custom integrations for every model, application, and use case, organizations can create reusable MCP servers that any compatible AI system can interact with.

The result is a more scalable, governable, and maintainable AI architecture.

As organizations move from a handful of AI experiments to dozens of operational use cases, MCP has the potential to become one of the foundational layers that makes enterprise AI sustainable long term.

Why Data Access Becomes the AI Bottleneck

Right now, I think most AI conversations are centered around models.

Organizations compare GPT, Claude, Gemini, Llama, Copilot, open-source frameworks, and emerging agent platforms trying to determine which option will give them the biggest competitive advantage. While those decisions matter, they are rarely the biggest long-term obstacle.

The real challenge appears once organizations attempt to operationalize AI across multiple systems, departments, and workflows.

The issue is not generating responses. It is getting AI systems secure, governed access to the right enterprise data at the right time.

That becomes complicated quickly.

Most organizations need AI systems to interact with a growing mix of CRMs, ERPs, SharePoint environments, Microsoft Teams, file repositories, line-of-business applications, APIs, and proprietary systems. Without a standardized access layer, teams often start building custom integrations independently.

One team creates a Salesforce integration. Another creates a slightly different version several months later. A third builds another variation for a separate agent platform. Over time, organizations end up maintaining dozens of fragmented integrations spread across multiple projects and teams.

That creates hidden operational cost.

One of the biggest things I’ve been telling organizations lately is this:

“The model side is mostly a solved problem. What you can’t swap out in an afternoon is the forty integrations somebody wrote to let the model actually see your company’s data.”

The model layer may evolve rapidly, but the integration layer becomes the real engineering burden.

As organizations move beyond experimentation and begin scaling AI initiatives operationally, data access increasingly becomes the bottleneck that slows progress.

What MCP Actually Is

MCP stands for Model Context Protocol.

At its core, MCP is simply a standardized way for AI systems to communicate with external tools, systems, and data sources.

Instead of every AI application needing its own custom integration logic, MCP creates a common protocol both sides understand.

In practice, organizations create MCP servers that sit in front of enterprise systems and expose capabilities in a format AI agents can interpret. Those MCP servers may connect to Salesforce, ServiceNow, Microsoft Graph, SQL databases, SharePoint, Microsoft Fabric environments, internal APIs, or custom business applications.

Any AI agent or platform that supports MCP can then interact with those systems without requiring organizations to repeatedly rebuild integration logic.
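To make that a little more concrete, here is a minimal sketch of what sits behind an MCP server, written with only the Python standard library. MCP messages are JSON-RPC 2.0, and the `tools/list` and `tools/call` method names and result shapes below follow the protocol's tool conventions as I understand them. Everything else is an assumption for illustration: the `get_customer` tool, the in-memory customer data, and the `handle_request` function are hypothetical. A real server would normally use an official MCP SDK and would also handle the initialization handshake and a transport, which this sketch omits.

```python
import json

# Hypothetical in-memory stand-in for a CRM; a real server would call the CRM's API.
_CUSTOMERS = {"C-100": {"name": "Contoso Ltd", "tier": "gold"}}


def get_customer(customer_id: str) -> str:
    """Hypothetical tool: return one customer record as JSON text."""
    record = _CUSTOMERS.get(customer_id)
    if record is None:
        raise ValueError(f"unknown customer {customer_id}")
    return json.dumps(record)


# Tool catalog: description, JSON Schema for the arguments, and the handler.
TOOLS = {
    "get_customer": {
        "description": "Look up a customer by ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
        "handler": get_customer,
    }
}


def _error(request_id, code: int, message: str) -> dict:
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle_request(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 request for tools/list or tools/call."""
    request_id = request.get("id")
    method = request.get("method")

    if method == "tools/list":
        # Advertise each tool so an agent can discover what it may call.
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": tools}}

    if method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, "Unknown tool")
        try:
            text = tool["handler"](**params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": text}], "isError": False}
        except Exception as exc:
            # Tool failures are reported inside the result, not as protocol errors.
            result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
        return {"jsonrpc": "2.0", "id": request_id, "result": result}

    return _error(request_id, -32601, "Method not found")
```

An agent that speaks MCP would first send `{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}` to discover the tool, then send a `tools/call` request with `{"name": "get_customer", "arguments": {"customer_id": "C-100"}}`. The agent never needs to know how the CRM itself is reached.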

I often compare it to ODBC for databases.

Years ago, ODBC standardized how applications connected to databases so developers no longer needed completely custom integrations for every database engine. MCP follows a similar pattern for AI systems.

Instead of every agent integrating directly with every system independently, organizations can move toward standardized MCP-compatible access layers.

That architectural shift becomes increasingly important as enterprise AI ecosystems become more complex.

Why Direct Integrations Don’t Scale

Before MCP, most organizations handled AI integrations manually.

Developers would write custom API calls, configure authentication, map responses, transform data formats, and build workflow orchestration logic independently for each use case.

At small scale, this seems manageable.

At enterprise scale, it becomes unsustainable.

Without standardization, organizations eventually create what amounts to integration sprawl. Multiple teams build duplicate connectors. Access controls become inconsistent. Logging standards vary from project to project. Governance becomes fragmented.

The problem compounds as organizations adopt more AI tools, copilots, agents, automation systems, and workflows.

I usually describe this as an “N times M” problem.

Without MCP, each of N agents must connect individually to each of the M systems it needs to access, so the number of integrations grows as N × M. With MCP, each system is exposed once through a reusable access layer and each agent only needs to speak the protocol, so the work grows closer to N + M.

That dramatically changes the long-term scalability equation.

The difference may not feel significant at the second integration. It becomes enormous at the fifteenth.
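The arithmetic is easy to check. The sketch below assumes the simplest possible model: every agent needs every system, each direct connection counts as one integration, and the shared approach needs one server per system plus one client per agent. The function names and the sample numbers are mine, chosen only for illustration; real environments will be messier.

```python
def direct_integrations(agents: int, systems: int) -> int:
    """Point-to-point: every agent is wired to every system."""
    return agents * systems


def shared_layer_components(agents: int, systems: int) -> int:
    """Shared protocol: one server per system plus one client per agent."""
    return agents + systems


# Hypothetical growth path from a first pilot to a broad program.
for agents, systems in [(1, 2), (2, 2), (5, 8), (15, 10)]:
    direct = direct_integrations(agents, systems)
    shared = shared_layer_components(agents, systems)
    print(f"{agents} agents x {systems} systems: {direct} direct vs {shared} shared")
```

With 2 agents and 2 systems the two approaches cost the same (4 and 4). With 15 agents and 10 systems, the direct approach needs 150 integrations and the shared approach needs 25 components.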

This is one reason many organizations successfully launch one or two AI pilots but begin struggling operationally as they attempt to scale broader AI programs.

The challenge often is not the AI itself.

It is the growing complexity underneath it.

What “Build Once, Use Anywhere” Actually Means

One of the most important concepts behind MCP is reusability.

Imagine an organization creates an MCP server that exposes customer data from a CRM platform.

Initially, that MCP server may support a sales copilot. Later, the same MCP server could support an operations agent, a Teams-based assistant, an analytics workflow, a reporting agent, or a mobile AI application.

Without rebuilding the integration layer each time.
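A rough sketch of that reuse pattern looks like this. It is not real MCP client code: `CrmAccessLayer`, the `get_account` tool, and the two consumer functions are hypothetical stand-ins, and the data is made up. The point is only that both consumers go through the same entry point, so nothing on the CRM side is rebuilt when the second consumer arrives.

```python
from typing import Dict


class CrmAccessLayer:
    """Hypothetical stand-in for a single MCP server fronting a CRM."""

    def __init__(self) -> None:
        # Made-up data; a real server would query the CRM.
        self._accounts = {"C-100": {"name": "Contoso Ltd", "open_orders": 3}}
        self.calls_by_consumer: Dict[str, int] = {}

    def call_tool(self, consumer: str, name: str, arguments: dict) -> dict:
        # Every consumer uses this one entry point, so usage is visible in one place.
        self.calls_by_consumer[consumer] = self.calls_by_consumer.get(consumer, 0) + 1
        if name != "get_account":
            raise ValueError(f"unknown tool: {name}")
        return self._accounts[arguments["account_id"]]


def sales_copilot(server: CrmAccessLayer) -> str:
    """First consumer: built when the server was created."""
    account = server.call_tool("sales-copilot", "get_account", {"account_id": "C-100"})
    return f"Prep notes for {account['name']}"


def operations_agent(server: CrmAccessLayer) -> str:
    """Second consumer: added later, with no change to the server."""
    account = server.call_tool("operations-agent", "get_account", {"account_id": "C-100"})
    return f"{account['name']} has {account['open_orders']} open orders"


server = CrmAccessLayer()
print(sales_copilot(server))
print(operations_agent(server))
print(server.calls_by_consumer)
```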

That changes how organizations think about enterprise AI architecture.

Instead of every project starting from scratch, organizations can begin building reusable AI infrastructure.

The operational benefits become significant over time. Organizations can reduce engineering overhead, improve governance consistency, accelerate deployment timelines, and simplify long-term maintenance.

This is one reason MCP is attracting significant attention across the AI ecosystem.

The value is not just interoperability.

The value is operational scalability.

How MCP Changes Enterprise AI Economics

One of the clearest benefits of MCP is how it changes the economics of scaling AI.

Many organizations successfully launch one or two AI initiatives. The problems often emerge later.

As the number of use cases grows, integration costs rise, governance becomes fragmented, engineering overhead increases, and maintenance complexity expands rapidly.

This is where many enterprise AI programs begin slowing down.

Not because the AI failed.

Because the operational architecture underneath it became difficult to sustain.

MCP helps reduce that complexity by creating reusable integration pathways. Instead of integration work growing multiplicatively with every new pairing of agent and system, it can grow closer to linearly.

That distinction matters.

Without MCP, every new AI tool introduces additional integration overhead. With MCP, organizations can often reuse existing access layers immediately.

This is one reason many technical leaders increasingly view MCP not as a niche protocol, but as foundational infrastructure for enterprise AI ecosystems.

Security and Governance Considerations

Security and governance become significantly more important as AI systems gain broader access to organizational data and workflows.

Without centralized access management, organizations often struggle with inconsistent permissions, fragmented logging, poor auditability, excessive credentials, and limited visibility into how AI systems are interacting with enterprise systems.

MCP introduces an opportunity to centralize many of those controls.

With a unified access layer, organizations can standardize authentication, apply centralized authorization policies, monitor AI activity more consistently, improve observability, and reduce credential sprawl.
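Here is one way that centralization could look in code: a single gateway function that checks a policy and writes an audit record before any tool runs. This is a sketch under stated assumptions, not a feature of MCP itself. The `POLICY` table, the tool names, the `mcp.audit` logger name, and `governed_call` are all hypothetical, and the `agent_id` is assumed to come from an identity token that has already been verified elsewhere.

```python
import logging
from typing import Callable, Dict, Set

audit_log = logging.getLogger("mcp.audit")  # hypothetical logger name

# Hypothetical policy: which agent identity may call which tools.
POLICY: Dict[str, Set[str]] = {
    "sales-copilot": {"get_customer"},
    "ops-agent": {"get_customer", "update_ticket"},
}

# Hypothetical tools; real handlers would call the underlying systems.
TOOLS: Dict[str, Callable[..., str]] = {
    "get_customer": lambda customer_id: f"record for {customer_id}",
    "update_ticket": lambda ticket_id, status: f"{ticket_id} set to {status}",
}


class AccessDenied(Exception):
    """Raised when the policy does not allow the requested call."""


def governed_call(agent_id: str, tool: str, arguments: dict) -> str:
    """Authorize, audit, then execute a tool call in one place."""
    allowed = tool in POLICY.get(agent_id, set())
    # Log every attempt, including denied ones, so audits see the full picture.
    audit_log.info("agent=%s tool=%s allowed=%s", agent_id, tool, allowed)
    if not allowed:
        raise AccessDenied(f"{agent_id} may not call {tool}")
    return TOOLS[tool](**arguments)
```

Because every agent passes through `governed_call`, tightening a permission or changing the log format is a single change rather than one change per integration.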

That becomes especially important as organizations adopt AI agents and autonomous workflows capable of taking action rather than simply retrieving information.

Governance challenges increase dramatically once AI systems begin operating across environments independently.

This is where MCP begins intersecting naturally with technologies like Microsoft Entra, Microsoft Purview, Microsoft Defender, and broader zero trust architectures.

The more organizations operationalize AI, the more critical centralized governance becomes.

How the Enterprise AI Stack Connects Together

One of the reasons MCP is gaining so much attention is that organizations are no longer building isolated AI tools. They are building interconnected AI ecosystems that span data platforms, governance layers, productivity systems, AI agents, and enterprise applications.

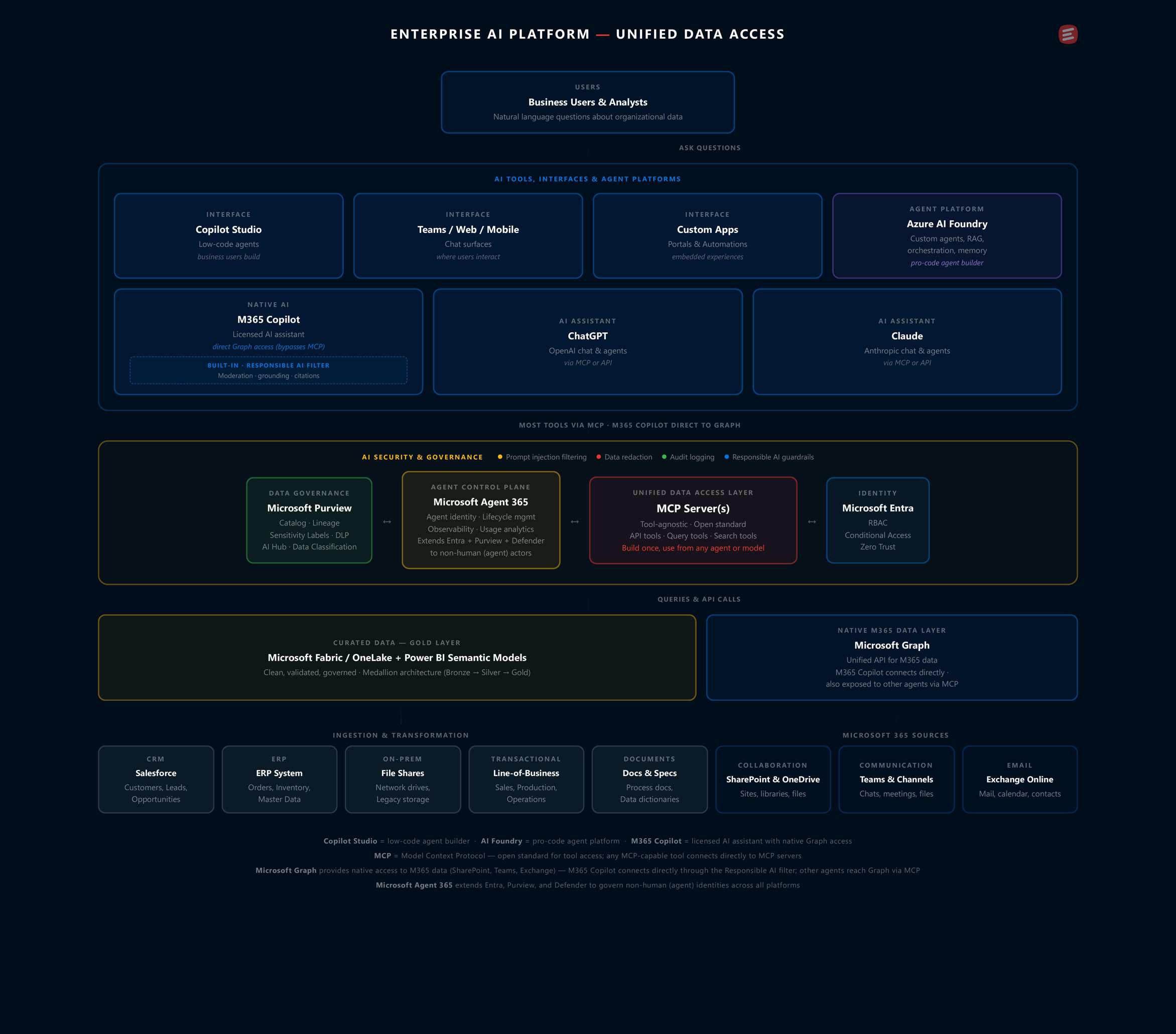

Within the Microsoft ecosystem, MCP acts as a connective layer between AI tools and enterprise systems. Instead of creating separate integrations for every agent or AI platform, organizations can expose governed, reusable access points that multiple AI systems can consume securely.

This architecture becomes increasingly important as organizations scale beyond a few AI pilots and begin operationalizing AI across departments and workflows.

The diagram above illustrates how MCP fits into a broader enterprise AI architecture alongside Microsoft technologies, governance layers, AI platforms, and enterprise systems.

Where MCP Fits in the Microsoft AI Stack

Within the Microsoft ecosystem, MCP fits naturally between AI systems and enterprise data sources.

Organizations may use Azure AI Foundry for orchestration, Microsoft Fabric and OneLake for data management, Microsoft Graph for Microsoft 365 access, Copilot as an end-user AI interface, Microsoft Entra for identity, and Microsoft Purview for governance and compliance.

MCP acts as connective tissue between those layers.

Rather than building isolated integrations repeatedly, organizations can expose systems through MCP-compatible interfaces that multiple AI systems can consume.

This creates a more modular and interoperable architecture while supporting stronger governance and long-term maintainability.

Microsoft’s growing support for MCP is also significant.

The broader AI ecosystem appears to be moving toward more open interoperability standards rather than tightly isolated integrations. Microsoft’s investment in MCP is a strong indicator that standardized AI connectivity layers are likely to become increasingly important moving forward.

When Organizations Should Start Thinking About MCP

One of the biggest misconceptions surrounding MCP is that organizations should wait until they are “larger” or “more mature” before thinking about it.

In reality, earlier adoption often creates less technical debt later.

Organizations frequently delay standardization until after multiple pilots, independent AI projects, and department-specific agents have already been built.

At that point, retrofitting governance and standardization becomes significantly harder.

My recommendation is pretty straightforward.

If an organization is already building its second meaningful AI use case, MCP should likely already be part of the conversation.

That does not mean every organization needs a massive MCP architecture immediately.

But introducing standardization early helps avoid rebuilding and refactoring later.

And as AI ecosystems continue evolving rapidly, flexible interoperability may become one of the most valuable architectural decisions organizations make.

The Bigger Picture

AI adoption is moving quickly, but most organizations are still in the early stages of operational maturity.

The current focus on models will eventually give way to broader conversations around governance, interoperability, orchestration, identity, observability, and scalability.

That is where MCP becomes strategically important. The organizations that scale AI successfully long term will likely not be the ones with the flashiest demos. They will be the ones building sustainable operational architectures underneath their AI ecosystems.

MCP has the potential to become one of the foundational standards that enables that shift.

FAQ

Is MCP a Microsoft technology?

No. MCP is an open protocol, originally introduced by Anthropic in November 2024, designed to standardize how AI systems access tools, systems, and data. However, Microsoft has increasingly embraced MCP within its broader AI ecosystem, particularly alongside Azure AI Foundry, Copilot, Microsoft Graph, and enterprise AI orchestration strategies.

Does MCP replace APIs?

No. APIs still exist underneath MCP. MCP acts as a standardized layer on top of those APIs that AI systems can interact with more consistently. Rather than replacing APIs, MCP helps abstract and standardize how AI agents use them.

Why does MCP matter for enterprise AI?

MCP helps organizations avoid building repetitive custom integrations for every AI tool, system, and use case. It creates a reusable access layer that improves scalability, governance, maintainability, and interoperability across AI ecosystems.

Is MCP only relevant for large enterprises?

Not necessarily. In many cases, introducing MCP earlier can help organizations avoid integration sprawl later. Organizations building multiple AI use cases or planning broader AI adoption may benefit from thinking about MCP sooner rather than later.

How does MCP relate to Microsoft Fabric and Azure AI Foundry?

Fabric and OneLake manage enterprise data. Azure AI Foundry supports AI orchestration and agent development. MCP helps connect AI systems to the tools, systems, and data sources they need to access. Together, these technologies form part of a broader enterprise AI architecture.

Is MCP production-ready today?

MCP is still evolving, and tooling continues to mature across the ecosystem. However, many organizations are already experimenting with MCP architectures and integrations today. Early adopters may gain meaningful advantages as the protocol becomes more widely adopted across enterprise AI platforms.